The Earliest Warning Sign: How Measuring Readiness at Training Predicts Trial Risk Before It Shows Up in the Data

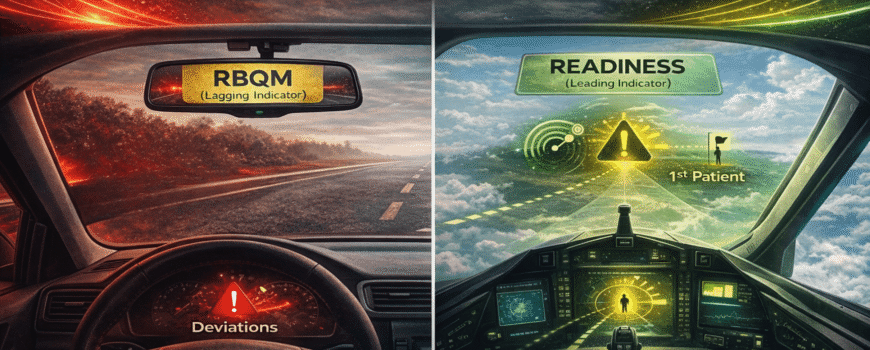

Most protocol deviations start as human problems, not operational ones, but risk-based monitoring typically relies on lagging indicators that arrive too late to prevent these problems instead of measuring readiness during training, when intervention still matters.

Volume 35, Issue 2: 4-01-2026

“Readiness, measured during training at site start-up, is one of the few leading indicators that is both measurable and actionable with meaningful lead time. Used well, readiness does not simply inform monitoring intensity; it helps shape how quality is built into site activation and early execution.”

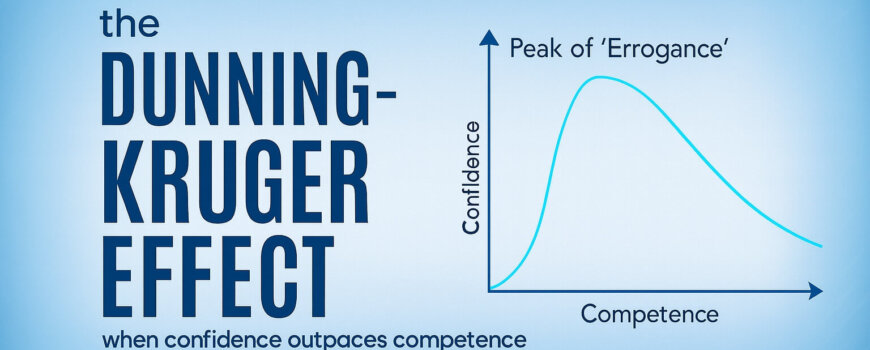

Most problems in clinical trials don’t begin as operational problems. They begin as human problems. A protocol deviation rarely starts with defiance. It starts with ambiguity, a rushed choice, a misunderstood step, an assumption that feels reasonable or confidence that has outpaced competence. Those moments compound. And by the time the numbers signal trouble, the real cause is often weeks or months old.

This is an uncomfortable truth for a field that loves dashboards. We measure what is visible, what is countable, what fits neatly into a report. But the roots of human performance are not always immediately visible, and are rarely neat. People manage complexity with mental shortcuts. They conserve effort under pressure. They overestimate what they understand when things feel familiar. They default to habit when workflows are crowded. None of this is a character flaw. It’s the human condition, how our brains cope with too much information and too little time. We’ve explored many of these patterns in our prior 19 Applied Clinical Trials columns.

Given these lessons, we now know that many of the most critical risks in clinical research are (thankfully) often predictable. Not because we can forecast every operational twist, but because the cognitive and behavioral conditions that produce these risks are remarkably consistent. The real question is whether we choose to measure early enough, when we can still do something about it, or later, when the best we can do is document and react.

Risk-based monitoring’s blind spot: Signals that arrive too late

Risk-based monitoring (RBM) was supposed to be the industry’s answer to this dilemma. Focus on what matters most, reduce waste, and use signals to guide attention. In principle, RBM is a behavioral strategy: It shapes the system, so people invest effort where it produces the greatest return. In practice, though, many RBM programs still function like rearview mirrors.

They rely on lagging indicators, metrics that confirm trouble after it has already arrived: rising query volumes, delayed data entry, accumulating eligibility or dosing deviations, safety reporting delays or recurring documentation findings identified during monitoring visits. Eligibility deviations, for example, may only surface once monitors review source documents and find that multiple subjects were enrolled under slightly different interpretations of the same inclusion criterion. These measures are valuable, but they are lagging and descriptive. They tell you what happened.

Leading indicators do something different. They do not measure outcomes. They measure the conditions that make outcomes likely. They provide early signals that enable prevention rather than the costly cleanup. And prevention matters because in clinical trials, time is not a luxury. It is the currency.

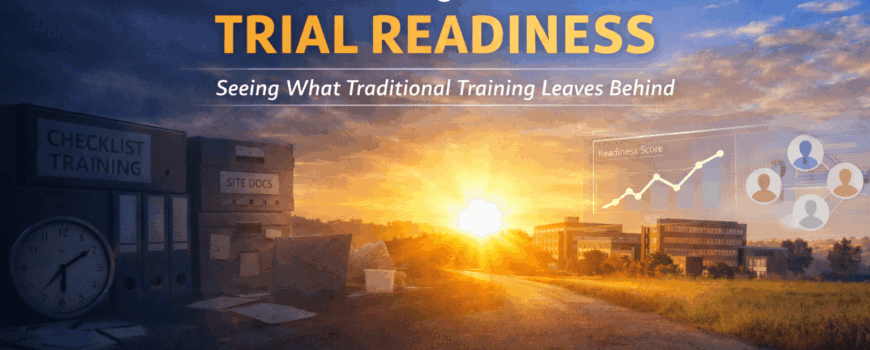

This brings us to a curious blind spot. One of the most valuable windows into human performance appears before the first patient is enrolled: during site start-up and training. Yet we often treat training as a simple administrative “to-do” item. Content is delivered. Attendance is recorded. Completion is tracked. A box is checked. Everyone feels a brief relief that something is “done,” even though little has been achieved beyond compliance.

Our brains love this kind of closure. Completion feels like progress. A certificate feels like competence. Familiarity feels like mastery. We confuse exposure with ability. We mistake recognition for recall and recall for performance. We see a passing quiz score and assume people can execute under pressure. We assume that because someone nodded through training, they will perform flawlessly when the first subject arrives, the clinic is busy and their judgment matters more than memory. That gap often surfaces during the first real patient interaction, when the site must interpret the protocol without slides or instructors. It shows up when the site calls the CRA during its first screening visit to confirm basic eligibility criteria.

If the only “signal” we capture at training is completion, then RBM has no choice but to wait for lagging indicators to emerge. The result is a system designed to detect consequences rather than the conditions that produce them.

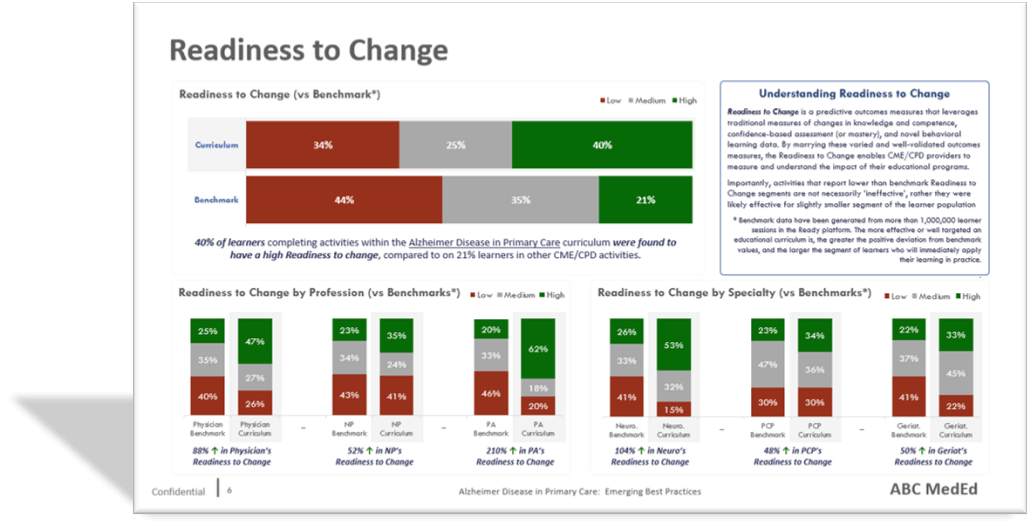

Readiness: A leading indicator with a runway

Readiness changes that. Readiness can be measured during training in ways that reflect real performance, not exposure. It captures what people know, what they can do and whether their confidence is calibrated to competence. It can also include mindset signals that predict execution when things get messy: curiosity, self-regulation, intention, grit and reflection. These aren’t “soft traits.” They predict whether someone will seek clarity, monitor their own understanding and persist through inevitable friction. In clinical research, those behaviors are often the difference between a minor hiccup and a downstream cascade. Sites that struggle to demonstrate mastery and readiness during training are predictably the same sites that generate early clarification emails, dosing questions and preventable protocol deviations.

The simplest point is the most powerful: If readiness is measurable during training, it’s a leading indicator of risk. It exists weeks or months before enrollment. That timing matters because it creates what trials rarely have: a runway for response. Intervening here can mean the difference between weeks of corrective monitoring versus a smooth first patient in.

Lagging indicators tell you that a site is producing deviations. Leading indicators tell you that a site is likely to produce deviations unless you intervene. Those two statements are worlds apart. The first triggers monitoring and correction. The second enables prevention. And prevention is the true promise of RBM.

Once you embrace readiness as a legitimate risk signal, the operational implications become straightforward. The readiness distribution across sites becomes a risk map before enrollment begins. Instead of reacting to early deviations, trial teams can prioritize support for the sites most likely to struggle with their first subjects. High-readiness sites become opportunities for early momentum, faster activation and a cleaner first patient experience. Mid-readiness sites become targets for precise, time-limited support that prevents predictable mistakes. Low-readiness sites become candidates for remediation before activation, or for activation with heavier enablement and tighter early oversight. The goal is not to label sites. The goal is to allocate resources more efficiently, when they still matter.

This also reframes an uncomfortable reality that experienced trial teams know but rarely say plainly: Not all sites are equally ready post-activation, and treating them as if they are is not “fair” — it is inefficient. Fairness in clinical research should mean giving each site what it needs to succeed, not giving each site the same level of attention regardless of risk. Readiness allows you to do that ethically and effectively because it shifts the decision from opinion to evidence.

There is a deeper benefit here, one that sits squarely in the human factors theme of this series. Readiness doesn’t just predict errors; it predicts how people respond to uncertainty. When readiness is low, the brain compensates in predictable ways: It fills gaps with assumptions, over-relies on habit, avoids effortful reasoning, delays asking questions and mistakes familiarity for understanding. Those are default behaviors under cognitive load. Measuring readiness early is one way to measure cognitive load before it expresses itself as operational noise.

If we want RBM to be more than smarter surveillance, we should stop waiting for the trial to tell us it is struggling. By the time problems surface in monitoring metrics, variability in protocol execution or documentation may already be taking hold. We should look earlier, when the human factors are signaling risk in plain sight. Training completion is a bureaucratic milestone. Readiness is a prediction, and it is one of the earliest measurable indicators of how consistently a site is likely to execute the protocol once real patients and real time pressures enter the system. If the goal is to optimize trial performance, prediction beats documentation every time.

RBM is a step in the right direction. It improves how we detect and respond to emerging risk. But its broader goal is to design trials so fewer risks emerge in the first place. RBM remains reactive if its inputs are mostly lagging. Readiness, measured during training at site start-up, is one of the few leading indicators that is both measurable and actionable with meaningful lead time. Used well, readiness does not simply inform monitoring intensity; it helps shape how quality is built into site activation and early execution. It turns a check-the-box requirement into an early warning system, and it gives trial teams the advantage dashboards rarely provide: time to act before risk becomes reality.

Brian S. McGowan, PhD, FACEHP, is chief learning officer and co-founder of ArcheMedX, Inc.; Kelly Ritch is chief operating officer of ArcheMedX, Inc.